Discover how Retrieval-Augmented Generation (RAG) is transforming the way businesses manage, access, and leverage their most valuable asset: information.

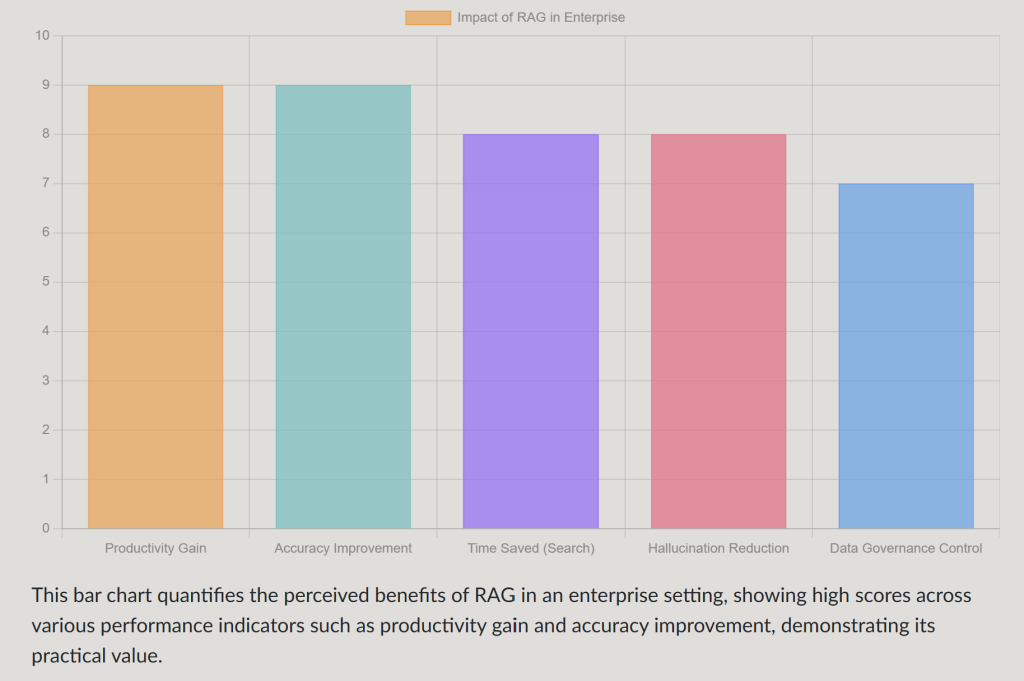

Key Insights into RAG’s Impact

- Bridging the Knowledge Gap: RAG revolutionizes enterprise knowledge management by allowing Large Language Models (LLMs) to access and utilize an organization’s internal, up-to-date data, overcoming the limitations of pre-trained models.

- Enhancing Accuracy and Trust: By grounding AI responses in verifiable, internal documents, RAG substantially reduces hallucinations and improves precision, fostering greater trust in AI-generated insights.

- Democratizing Information Access: RAG empowers employees across departments with natural language access to comprehensive, accurate information, driving efficiency and better decision-making throughout the enterprise.

In today’s fast-paced business environment, organizations are inundated with data. Yet, this wealth of information often resides in isolated “data silos”—disconnected pockets that hinder access, sharing, and effective utilization. This fragmentation leads to inefficiencies, missed opportunities, and challenges in decision-making. The emergence of Retrieval-Augmented Generation (RAG) is proving to be a revolutionary solution, transforming how enterprises manage their knowledge and paving the way for unified intelligence.

What Exactly is Retrieval-Augmented Generation (RAG)?

A Powerful Fusion of Retrieval and Generation

At its core, RAG is an advanced artificial intelligence technique that combines the strengths of information retrieval systems with the generative capabilities of Large Language Models (LLMs). Traditional LLMs rely solely on their pre-trained data, which can quickly become outdated or lack specific enterprise context. RAG augments these models in two steps. It first retrieves relevant, up-to-date information from an organization’s private or internal knowledge bases. It then supplies that information to the model as context when the model generates its answer.

This two-step process grounds the AI’s responses in the most current, specific data available to the enterprise. As a result, the answers are coherent and well-articulated, and they are also far more likely to be factually accurate. Imagine an AI system that doesn’t just “think” but also “looks up” information from your company’s documents before providing an answer. That’s RAG in action.
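The two steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design. The keyword-overlap `retrieve` function is a deliberately naive stand-in for a real search index. `generate` is a hypothetical placeholder for whatever LLM client an organization actually uses.

```python
from typing import Callable


def retrieve(query: str, documents: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Step 1 (retrieval): rank documents by words shared with the query.
    Naive keyword overlap, used only as a stand-in for a real search index."""
    query_terms = set(query.lower().split())
    scored = []
    for doc_id, text in documents.items():
        overlap = len(query_terms & set(text.lower().split()))
        if overlap > 0:
            scored.append((overlap, doc_id, text))
    # Highest overlap first; ties broken alphabetically by id for stable output.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def build_prompt(query: str, passages: list[tuple[str, str]]) -> str:
    """Augmentation: place the retrieved passages in front of the question."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the context below and cite the [ids] you rely on.\n"
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


def answer(query: str, documents: dict[str, str], generate: Callable[[str], str]) -> str:
    """Step 2 (generation): send the augmented prompt to the LLM.
    `generate` is a hypothetical placeholder for any LLM client call."""
    return generate(build_prompt(query, retrieve(query, documents)))


if __name__ == "__main__":
    docs = {
        "hr-7": "employees accrue 20 vacation days per year",
        "it-2": "reset your vpn password through the self-service portal",
    }
    # An echo function stands in for the model so the sketch runs offline.
    print(answer("how many vacation days do employees get", docs, generate=lambda p: p))
```

The key design point is that the model never answers from memory alone. Every call goes through `build_prompt`, which also instructs the model to admit when the retrieved context is insufficient.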

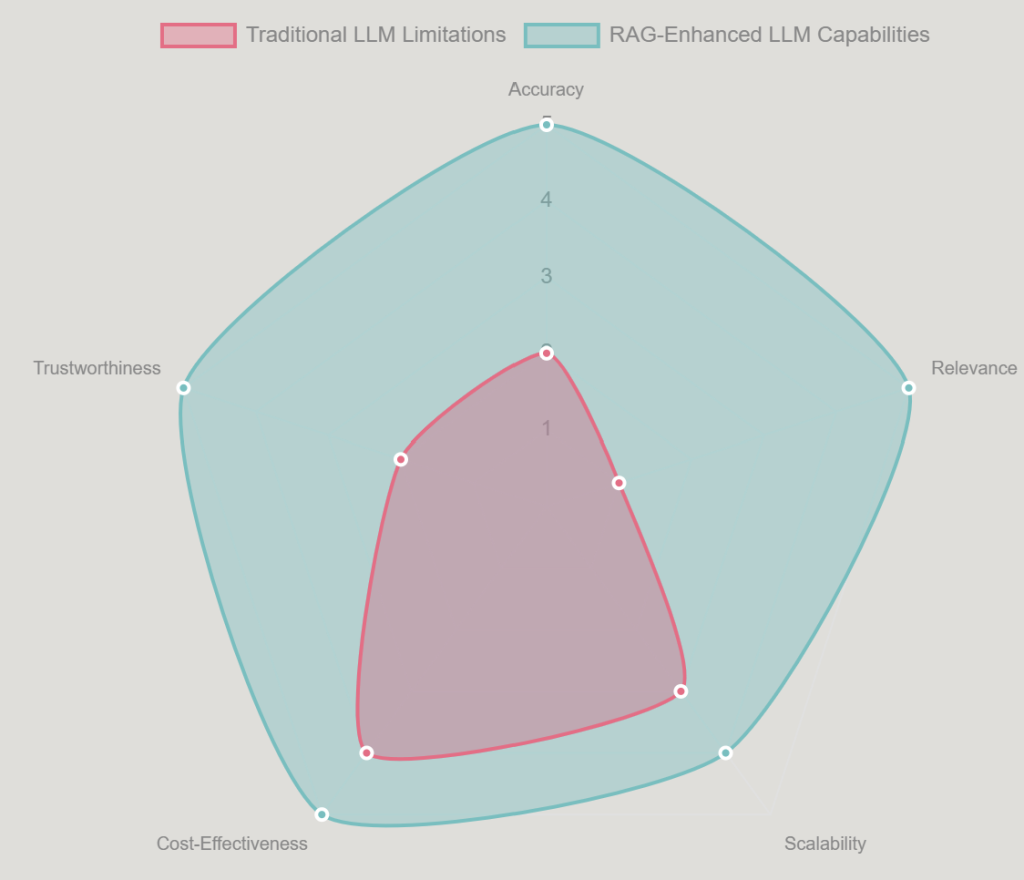

This radar chart illustrates the stark difference in capabilities between traditional LLMs and RAG-enhanced LLMs across critical dimensions for enterprise applications. It highlights how RAG boosts accuracy, relevance, and trustworthiness by drawing on current, organization-specific data, providing a compelling visual argument for its adoption in knowledge management.

Why RAG is a Game-Changer for Enterprise Knowledge Management

Breaking Down the Barriers of Disconnected Information

Traditional knowledge management systems often struggle with limitations such as outdated information, fragmented data sources, and the inability to understand complex natural language queries. RAG directly addresses these challenges, offering profound benefits for enterprises:

Enhanced Accuracy and Relevance

By grounding AI responses in an organization’s specific internal documents, policies, and data, RAG keeps answers precise and contextually relevant. This sharply reduces the time employees spend searching for information. It also lowers the risk of AI “hallucinations,” in which the model fabricates facts.

Democratizing Access to Knowledge

RAG-powered systems enable employees to ask questions in natural language and receive clear, sourced answers. This fosters a more self-sufficient workforce and allows for quicker access to critical information across departments, from customer support to engineering teams.


Improved Decision-Making

With comprehensive and accurate insights generated by RAG systems, leaders can make more informed, data-driven decisions. This is crucial for strategic planning, operational efficiency, and navigating complex market dynamics.

Scalability and Adaptability

RAG allows LLMs to process information far beyond their initial training data, making them applicable to a wider range of enterprise needs. As new information emerges, knowledge bases can be updated easily and cost-effectively, ensuring the AI remains current without the need for expensive and time-consuming LLM retraining.
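The "update instead of retrain" point can be made concrete with a toy in-memory inverted index in Python. The `KnowledgeIndex` class is hypothetical, not any particular product's API. Replacing a document removes its old terms, so the very next search reflects the new text, while the LLM itself is never touched.

```python
from collections import defaultdict


class KnowledgeIndex:
    """Toy inverted index (hypothetical). Documents can be added, replaced, or
    removed at any time, and the next query sees the change immediately."""

    def __init__(self) -> None:
        self.docs: dict[str, str] = {}
        self.postings: defaultdict[str, set[str]] = defaultdict(set)

    def upsert(self, doc_id: str, text: str) -> None:
        # Remove the old version's terms first so stale content cannot be retrieved.
        self.delete(doc_id)
        self.docs[doc_id] = text
        for term in set(text.lower().split()):
            self.postings[term].add(doc_id)

    def delete(self, doc_id: str) -> None:
        old = self.docs.pop(doc_id, None)
        if old is None:
            return
        for term in set(old.lower().split()):
            self.postings[term].discard(doc_id)
            if not self.postings[term]:
                del self.postings[term]

    def search(self, query: str) -> list[str]:
        # Rank document ids by the number of query terms they contain.
        counts: dict[str, int] = defaultdict(int)
        for term in set(query.lower().split()):
            for doc_id in self.postings.get(term, ()):
                counts[doc_id] += 1
        return sorted(counts, key=lambda d: (-counts[d], d))


if __name__ == "__main__":
    index = KnowledgeIndex()
    index.upsert("policy-1", "remote work allowed two days per week")
    # The policy changes: one cheap update, no model retraining.
    index.upsert("policy-1", "remote work allowed three days per week")
    print(index.search("three days"), index.search("two"))
```

A real deployment would typically re-embed changed documents in a vector store rather than rebuild word postings. The cost profile is similar, though: work grows with the number of changed documents, not with the size of the model.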

Enhanced Data Governance and Compliance

RAG frameworks provide organizations with greater control over which data sources the AI accesses. This is vital for maintaining privacy, ensuring compliance with regulations, and preserving the relevance and integrity of information. Data pipelines can be carefully curated to ensure security and accuracy.
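One common way to enforce this control is to filter candidate documents by source and by the user's group memberships before anything reaches the LLM. The sketch below rests on assumptions. The `Document` fields, group names, and source names are hypothetical. Real deployments would usually take this information from an identity provider and from the document store's own access lists.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    source: str  # hypothetical source names, e.g. "hr_wiki", "it_wiki"
    allowed_groups: frozenset[str] = field(default_factory=frozenset)


def authorized(doc: Document, user_groups: set[str], approved_sources: set[str]) -> bool:
    # Eligible only if the source is on the curated allowlist
    # AND the user belongs to at least one group permitted to read the document.
    return doc.source in approved_sources and bool(doc.allowed_groups & user_groups)


def filter_for_user(
    docs: list[Document], user_groups: set[str], approved_sources: set[str]
) -> list[Document]:
    """Apply access control before retrieval results are passed to the LLM."""
    return [d for d in docs if authorized(d, user_groups, approved_sources)]


if __name__ == "__main__":
    corpus = [
        Document("d1", "salary bands for 2024", "hr_wiki", frozenset({"hr"})),
        Document("d2", "how to set up the vpn", "it_wiki", frozenset({"all"})),
        Document("d3", "unreviewed draft memo", "personal_drive", frozenset({"all"})),
    ]
    visible = filter_for_user(corpus, {"all", "engineering"}, {"hr_wiki", "it_wiki"})
    print([d.doc_id for d in visible])
```

Filtering before generation, rather than asking the model to withhold restricted content, means restricted text is never placed in the prompt at all.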

Real-World Impact and Transformative Use Cases

Where RAG is Making a Difference Today

The practical applications of RAG are diverse and span across various industries and business functions:

- Internal Knowledge Management: Employees can instantly find accurate internal documentation, policies, and procedures, dramatically reducing search times and improving productivity. Imagine a new hire quickly finding the exact HR policy or a technical team member instantly accessing a specific product manual.

- Customer Service: RAG empowers customer support teams to provide faster, more accurate resolutions to customer inquiries by pulling up-to-date product details, FAQs, and troubleshooting guides. This leads to higher customer satisfaction and more efficient support operations.

- Research and Development: In research-heavy industries like pharmaceuticals and academia, RAG enables AI to efficiently process and synthesize vast amounts of complex information, accelerating discovery and innovation.

- Content Creation and Marketing: By grounding content in factual, verifiable data, RAG helps streamline the creation of accurate and compelling marketing materials, reports, and internal communications.

- Legal and Compliance: RAG can assist in navigating complex legal databases and compliance documents, ensuring that responses adhere to the latest regulations and internal guidelines.

The Synergy with Knowledge Graphs and Future Architectures

Unlocking Deeper Intelligence with Connected Data

The potential of RAG is further amplified when integrated with technologies like knowledge graphs. Knowledge graphs connect disparate data points and uncover hidden relationships, enabling the AI to reason more deeply and provide even more insightful outputs. This “Graph RAG” approach allows for more deterministic and explainable AI in complex knowledge work, moving beyond simple keyword matching to contextual understanding.
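The core idea of expanding context through connected data can be illustrated with a small, hypothetical knowledge graph stored as (subject, relation, object) triples. The sketch finds entities named in the question and walks their relationships a fixed number of hops. It then returns the facts it collected as extra context for the LLM. This is an illustrative assumption, not the method of any specific Graph RAG product. The naive substring entity matching in particular would normally be replaced by proper entity linking.

```python
from collections import deque

# Hypothetical triples; a real system would load these from a graph database.
TRIPLES = [
    ("Project Atlas", "owned_by", "Data Platform Team"),
    ("Data Platform Team", "reports_to", "CTO Office"),
    ("Project Atlas", "depends_on", "Billing API"),
    ("Billing API", "maintained_by", "Payments Team"),
]


def build_adjacency(triples: list[tuple[str, str, str]]) -> dict[str, list[tuple[str, str, str]]]:
    adj: dict[str, list[tuple[str, str, str]]] = {}
    for s, r, o in triples:
        # Index each fact under both endpoints so edges can be walked in either direction.
        adj.setdefault(s, []).append((s, r, o))
        adj.setdefault(o, []).append((s, r, o))
    return adj


def graph_context(query: str, triples: list[tuple[str, str, str]], max_hops: int = 2) -> list[str]:
    adj = build_adjacency(triples)
    # Seed entities: names that appear verbatim in the question (naive matching).
    seeds = [e for e in adj if e.lower() in query.lower()]
    seen_entities = set(seeds)
    seen_facts: set[tuple[str, str, str]] = set()
    facts: list[tuple[str, str, str]] = []
    frontier = deque((e, 0) for e in seeds)
    while frontier:  # breadth-first traversal up to max_hops
        entity, depth = frontier.popleft()
        if depth == max_hops:
            continue
        for fact in adj[entity]:
            if fact not in seen_facts:
                seen_facts.add(fact)
                facts.append(fact)
            for neighbor in (fact[0], fact[2]):
                if neighbor not in seen_entities:
                    seen_entities.add(neighbor)
                    frontier.append((neighbor, depth + 1))
    # Render facts as short sentences that can be appended to the LLM prompt.
    return [f"{s} {r.replace('_', ' ')} {o}" for s, r, o in facts]


if __name__ == "__main__":
    for line in graph_context("Who owns Project Atlas?", TRIPLES, max_hops=2):
        print(line)
```

With two hops, the question about Project Atlas also surfaces who the owning team reports to and who maintains its dependency. Pure text similarity would not necessarily connect these facts.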

Architectures range from straightforward retrieval-and-generation flows to more advanced systems that incorporate structured data and graph databases. The ongoing evolution of RAG promises increasingly sophisticated methods for grounding AI in enterprise-specific knowledge.

Navigating Implementation: Considerations for Success

Strategic Steps for Adopting RAG

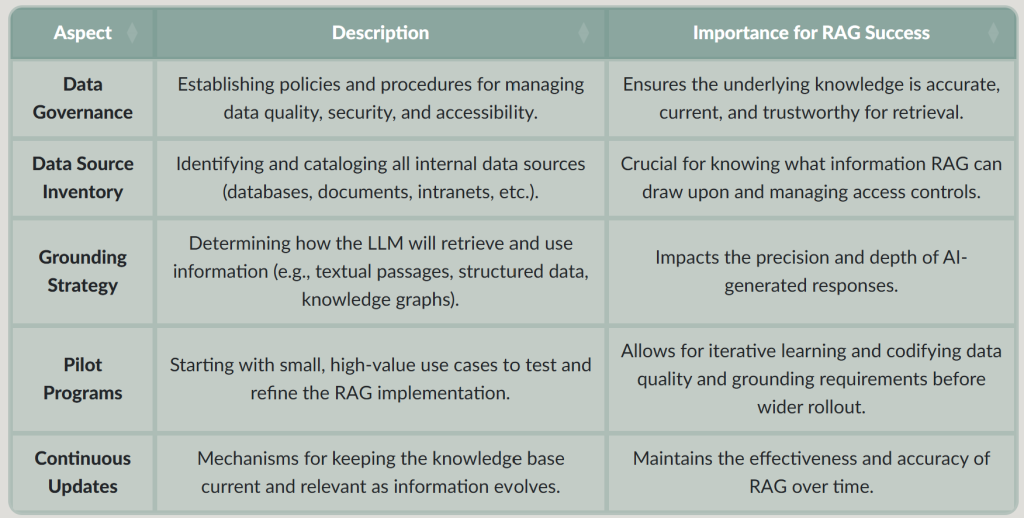

Successfully deploying RAG within an enterprise requires careful planning and a robust approach to data management. The key considerations are summarized in the table above.

This table outlines critical considerations and their importance when implementing RAG within an enterprise, from data governance to pilot programs.
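During a pilot program, one simple way to judge whether retrieval is finding the right material is to hand-label a small set of queries with the documents that should answer them. You then measure recall@k, the fraction of those documents that appear in the top k results. The sketch below assumes that such a labeled set exists. The `search` callable is a placeholder for whatever retriever the pilot uses.

```python
from typing import Callable, Sequence


def recall_at_k(retrieved: Sequence[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant document ids that appear in the top-k results."""
    if not relevant:
        raise ValueError("each labeled query needs at least one relevant document")
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def evaluate(
    search: Callable[[str], Sequence[str]], labeled: dict[str, set[str]], k: int = 5
) -> float:
    """Mean recall@k over a hand-labeled set of pilot queries."""
    scores = [recall_at_k(search(q), relevant, k) for q, relevant in labeled.items()]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Hypothetical retriever output and labels, for illustration only.
    fake_results = {"q1": ["a", "b", "c"], "q2": ["x", "y", "z"]}
    labeled = {"q1": {"a", "c"}, "q2": {"w"}}
    print(evaluate(lambda q: fake_results[q], labeled, k=3))
```

Tracking this number before and after changes such as chunk size or embedding model gives a pilot team a concrete signal. It does not depend only on anecdotal impressions of answer quality.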

A Glimpse into RAG’s Architecture and Principles

Understanding the Underpinnings of Intelligent Retrieval

To fully appreciate RAG’s transformative power, it’s helpful to understand its fundamental architecture. At a high level, a RAG system typically involves:

- Knowledge Base: This is the repository of your organization’s proprietary data—documents, databases, wikis, etc. This information is often pre-processed and indexed (e.g., converted into numerical representations called embeddings) to facilitate efficient retrieval.

- Retriever: When a user submits a query, the retriever component searches the knowledge base for the most relevant pieces of information. It uses techniques like semantic search to find passages that are conceptually related to the query, not just exact keyword matches.

- Generator (LLM): The retrieved information, along with the original user query, is then fed to a Large Language Model. The LLM uses this augmented context to generate a comprehensive, accurate, and natural-sounding response. Critically, it is “grounded” in the retrieved information, reducing its tendency to “hallucinate” or provide generic answers.
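The three components can be sketched end to end in plain Python. There are two important assumptions. First, `embed` is a hashed bag-of-words stand-in that matches shared words, not meaning, so a trained embedding model would replace it to get true semantic search. Second, splitting text into fixed 50-word chunks is only one illustrative pre-processing choice. The generator step stops at prompt construction, because the actual LLM call depends on the provider.

```python
import math
import re
import zlib

DIM = 256  # size of the toy embedding vectors


def chunk(text: str, max_words: int = 50) -> list[str]:
    """Pre-processing: split a document into fixed-size word windows."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> list[float]:
    """Stand-in embedding: hashed bag of words, normalised to unit length.
    A production system would call a trained embedding model here instead."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # crc32 is deterministic across runs, unlike Python's salted hash().
        vec[zlib.crc32(token.encode()) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def cosine(a: list[float], b: list[float]) -> float:
    # Both vectors are unit length, so the dot product equals cosine similarity.
    return sum(x * y for x, y in zip(a, b))


class Retriever:
    def __init__(self, documents: dict[str, str]) -> None:
        # Knowledge base: every chunk stored as (doc_id, chunk_text, vector).
        self.index = [
            (doc_id, c, embed(c)) for doc_id, text in documents.items() for c in chunk(text)
        ]

    def top_k(self, query: str, k: int = 3) -> list[tuple[str, str, float]]:
        q = embed(query)
        scored = [(doc_id, c, cosine(q, v)) for doc_id, c, v in self.index]
        scored.sort(key=lambda item: item[2], reverse=True)
        return scored[:k]


def generator_prompt(query: str, hits: list[tuple[str, str, float]]) -> str:
    """Generator input: the retrieved chunks plus the original question."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text, _ in hits)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer from the context and cite [ids]."


if __name__ == "__main__":
    kb = {
        "finance-3": "Submit travel expenses through the finance portal within 30 days",
        "it-9": "Connect to the VPN before accessing internal wikis",
    }
    retriever = Retriever(kb)
    question = "How do I submit travel expenses"
    print(generator_prompt(question, retriever.top_k(question, k=1)))
```

The scan in `top_k` compares the query against every chunk. That keeps the example simple, but at enterprise scale it would normally be replaced by an approximate nearest-neighbour index in a vector database.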

This systematic approach lets each AI response be traced back to the sources it was drawn from. That traceability makes RAG an indispensable tool for enterprises where accuracy and trust are paramount.
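Traceability can also be checked automatically. The sketch below assumes that the model was asked to cite passages as `[id]` markers, which is a prompt convention rather than a standard. It separates citations that match a retrieved passage from citations that do not. The check is deliberately shallow: it confirms that every citation points at something actually retrieved, but it does not verify that the passage supports the claim.

```python
import re

# Assumed citation format: [doc-id] with letters, digits, underscores, or hyphens.
CITATION = re.compile(r"\[([A-Za-z0-9_-]+)\]")


def check_citations(answer: str, retrieved_ids: set[str]) -> dict[str, list[str]]:
    """Split the [id] markers in an answer into ones backed by a retrieved
    passage and ones that are not (possibly fabricated)."""
    cited = CITATION.findall(answer)
    return {
        "supported": [c for c in cited if c in retrieved_ids],
        "unsupported": [c for c in cited if c not in retrieved_ids],
    }


def is_grounded(answer: str, retrieved_ids: set[str]) -> bool:
    # Require at least one valid citation and no citations to unknown sources.
    result = check_citations(answer, retrieved_ids)
    return bool(result["supported"]) and not result["unsupported"]


if __name__ == "__main__":
    reply = "Submit the claim in the finance portal [finance-3]. See also [hr-99]."
    print(check_citations(reply, {"finance-3", "it-9"}))
    print(is_grounded(reply, {"finance-3", "it-9"}))
```

An answer that fails `is_grounded` could be flagged for review or regenerated before it reaches the employee.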

This video, “Retrieval Augmented Generation (RAG): Boosting LLM Capabilities,” provides a concise overview of how RAG enhances LLMs by integrating external data, offering valuable insights into its operational mechanics.

The Path Forward: Embracing Unified Intelligence

RAG is more than just a technological upgrade; it represents a fundamental shift towards a more connected and intelligent enterprise. By effectively breaking down data silos, RAG creates a unified source of truth, enabling organizations to make smarter decisions faster, enhance operational efficiency, and drive innovation. As businesses continue to navigate an increasingly data-driven landscape, RAG is emerging as a strategic imperative for building resilient, adaptable, and truly intelligent systems.

Conclusion

Retrieval-Augmented Generation (RAG) is redefining enterprise knowledge management by seamlessly integrating the power of large language models with an organization’s specific, up-to-date data. This innovative approach offers a transformative solution to the long-standing challenge of data silos, fostering unified intelligence and empowering employees with accurate, relevant, and trustworthy information. As enterprises increasingly rely on AI for critical functions, RAG stands out as an essential technology for enhancing decision-making, boosting efficiency, and driving sustainable growth in an ever-evolving digital landscape. Its ability to ground AI responses in verifiable sources improves accuracy and builds trust, making it a cornerstone for future-proof knowledge ecosystems.